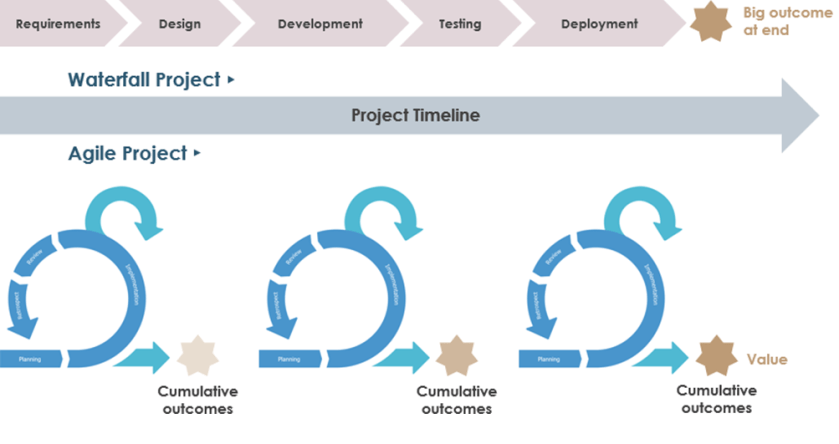

Comparing managed Kubernetes services in the cloud with on-premises Kubernetes solutions is a familiar exercise. Kubernetes (K8s) joined the Cloud Native Computing Foundation (CNCF) in 2016 and came onto the scene alongside open source solutions such as Red Hat OpenShift and SUSE Rancher. So, as you can imagine, Kubernetes has not been in the cloud for that long.

In fact, EKS launched on 5 June 2018, and Microsoft launched AKS on 24 October 2017. Here is the current AWS EKS release calendar.

And here is Microsoft's counterpart, the AKS release calendar.

Upgrade Kubernetes on Azure & AWS

There are no surprises when it comes to upgrading versions on either cloud Kubernetes service. On Azure, when you upgrade a supported AKS cluster, Kubernetes minor versions cannot be skipped. On AWS, because Amazon EKS runs a highly available control plane, you can update only one minor version at a time. So even in the cloud, magic doesn't exist. And we have some bad news for the lazy boys.

Regarding the cluster versions AKS allows, Microsoft says: “If a cluster has been out of support for more than three (3) minor versions and has been found to carry security risks, Azure proactively contacts you to upgrade your cluster. If you do not take further action, Azure reserves the right to automatically upgrade your cluster on your behalf”. So watch out: if you are a lazy boy, Microsoft can do the job for you :-).

But what happens to the lazy boys on AWS? Yes, you guessed it: “If any clusters in your account are running the version nearing the end of support, Amazon EKS sends out a notice through the AWS Health Dashboard approximately 12 months after the Kubernetes version was released on Amazon EKS. The notice includes the end of support date.” And in addition: “On the end of support date, you can no longer create new Amazon EKS clusters with the unsupported version. Existing control planes are automatically updated by Amazon EKS to the earliest supported version through a gradual deployment process after the end of support date. After the automatic control plane update, make sure to manually update cluster add-ons and Amazon EC2 nodes”.
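The one-minor-version-at-a-time rule that both AKS and EKS apply can be sketched in a few lines of Python. This is only an illustration of the planning logic, not a call to any Azure or AWS API; the function names `parse_minor` and `upgrade_path` are hypothetical, and the sketch assumes versions written as `major.minor` within a single major version.

```python
def parse_minor(version: str) -> tuple[int, int]:
    """Split a version such as '1.27' (or '1.27.3') into (major, minor)."""
    major, minor = version.split(".")[:2]
    return int(major), int(minor)


def upgrade_path(current: str, target: str) -> list[str]:
    """Return every minor version to step through, in order.

    Minor versions cannot be skipped, so going from 1.26 to 1.29
    means three separate upgrades.
    """
    cur_major, cur_minor = parse_minor(current)
    tgt_major, tgt_minor = parse_minor(target)
    if cur_major != tgt_major:
        raise ValueError("this sketch only handles one major version")
    if tgt_minor < cur_minor:
        raise ValueError("downgrades are not supported")
    return [f"{cur_major}.{m}" for m in range(cur_minor + 1, tgt_minor + 1)]


if __name__ == "__main__":
    for step in upgrade_path("1.26", "1.29"):
        print(f"upgrade control plane to {step}, then update nodes and add-ons")
```

A cluster that has fallen several minor versions behind therefore needs one upgrade per version in the list, which is a good reason not to let it drift.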

Also keep an eye on the API versions your workloads use: if they are deprecated and then removed in the version you upgrade to, you are in trouble. Please follow this guide to solve the issue as soon as possible.
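As a rough illustration of that kind of check, the sketch below flags manifests whose API version no longer exists in the target Kubernetes version. The `REMOVED_IN` table is a small, deliberately incomplete sample of well-known removals, and `find_removed` is a hypothetical helper; manifests are assumed to be already parsed into dictionaries. For a real cluster, rely on the official migration guide rather than this list.

```python
# Illustrative subset only: (apiVersion, kind) -> Kubernetes version that removed it.
REMOVED_IN = {
    ("extensions/v1beta1", "Ingress"): (1, 22),
    ("networking.k8s.io/v1beta1", "Ingress"): (1, 22),
    ("batch/v1beta1", "CronJob"): (1, 25),
    ("policy/v1beta1", "PodSecurityPolicy"): (1, 25),
}


def find_removed(manifests: list[dict], target: str) -> list[str]:
    """List manifests whose API version is gone in the target version."""
    major, minor = (int(part) for part in target.split(".")[:2])
    hits = []
    for manifest in manifests:
        key = (manifest.get("apiVersion"), manifest.get("kind"))
        removed = REMOVED_IN.get(key)
        # Flag the resource if the target is at or past the removal version.
        if removed is not None and (major, minor) >= removed:
            hits.append(
                f"{key[1]} ({key[0]}) was removed in {removed[0]}.{removed[1]}"
            )
    return hits


if __name__ == "__main__":
    manifests = [
        {"apiVersion": "apps/v1", "kind": "Deployment"},
        {"apiVersion": "batch/v1beta1", "kind": "CronJob"},
    ]
    for problem in find_removed(manifests, "1.25"):
        print(problem)
```

Running a check like this before each step of an upgrade gives you time to migrate manifests to the replacement API version first.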

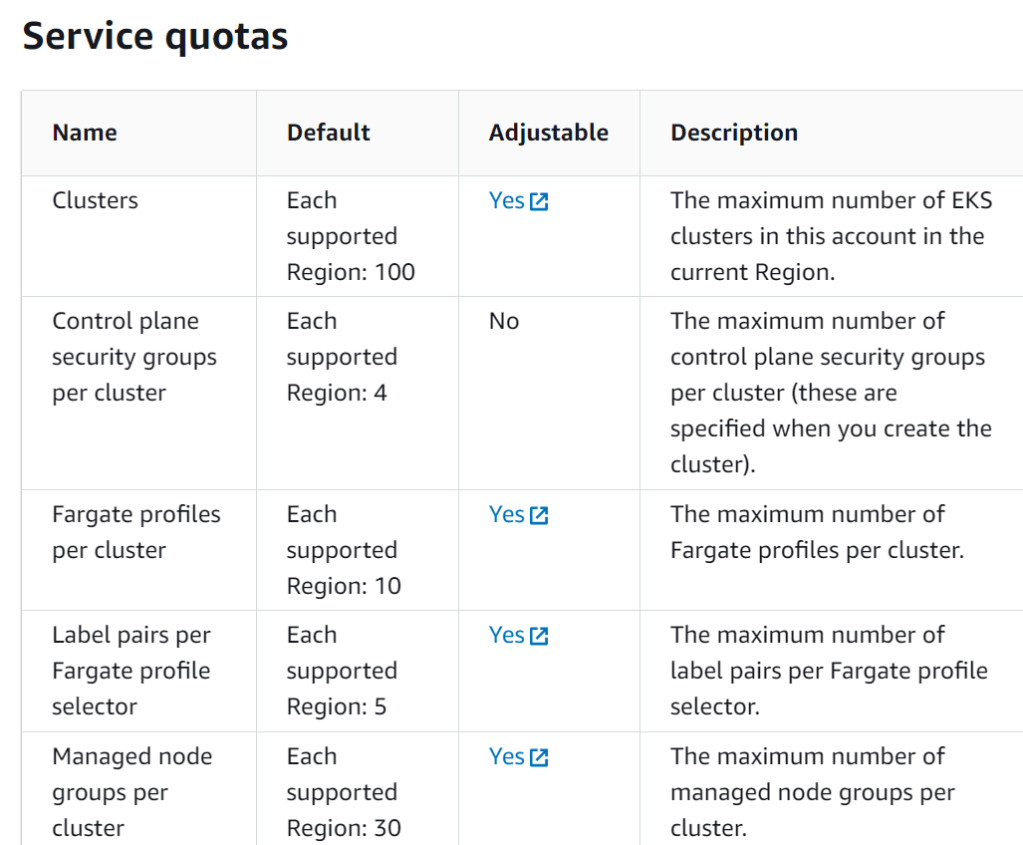

Quotas, Limits and Regions

When it comes to service quotas, limits and regions, you can draw a line in the sand to decide what makes sense for you and your applications. Each hyperscaler has its own capabilities in terms of scalability and resilience. Let's take a look.

MICROSOFT AZURE

AKS offers a strong solution for your applications…

In terms of region availability, everything will be smooth and cheerful as long as you don't have to work in China. If you do, and you want to avoid a struggle, just verify which regions have AKS ready to roll out.

AWS

Here you can see the EKS approach…

As for regions, please ask AWS directly about China. In the case of Africa, EKS is still not deployed there at the time of writing this post.

To summarize, AWS runs Kubernetes control plane instances across multiple availability zones. It automatically replaces unhealthy nodes and provides scalability and security for applications. It supports up to 3,000 nodes per cluster, but you have to pay for the control plane separately. AWS is more conservative about rolling out new Kubernetes versions and maintains older versions for longer.

Meanwhile, Microsoft AKS supports about 1,000 nodes per cluster, tends to be faster than AWS at providing newer Kubernetes versions, can repair unhealthy nodes automatically, supports native GitOps strategies, and integrates Azure Monitor metrics. The control plane comes in two flavours depending on your needs: a free tier and a paid tier.

SECURITY

EKS can encrypt its persistent storage data with AWS KMS, a service that, as many know, is very flexible and works with either customer-managed keys or AWS-managed keys. AKS uses Azure Storage Service Encryption (SSE) by default, which encrypts data at rest.

Finally, AKS can take advantage of Azure policies as well as Calico policies.

That said, AWS EKS also supports Calico. I hope this article helps clarify your vision, whether you are a cloud architect, a tech person or a CIO who wants to know where to migrate and refactor monolithic on-premises solutions.

Enjoy the journey to the cloud with me… see you in the next post.