Many companies have started adopting new technologies such as microservices, with Kubernetes as the main actor. They do so both to gain business agility when deploying new applications and to provide a solid platform for their most critical web services, such as shopping portals and booking services, so that they have the elasticity to provision resources and meet demand instantly.

Azure has its own flavour on the menu, AKS, just as AWS has EKS and GCP has GKE. Today I want to take a first look at the Microsoft solution, with its advantages and, depending on the customer vertical, perhaps some disadvantages.

But first, let's dive into a traditional open source plus hardware Kubernetes deployment and compare it with AKS, just to show you that the TCO and ROI are not exactly the same.

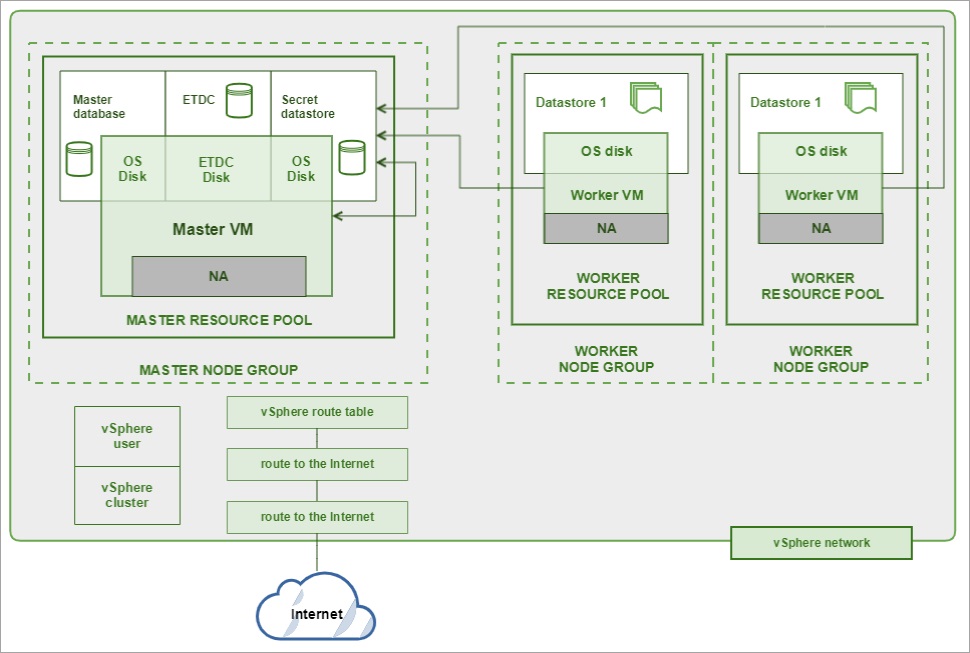

Hardware approach to deploying Kubernetes: Some customers prefer to invest in CAPEX, mostly storage and compute, and to minimize licensing costs by using open source Kubernetes solutions. So let's put together an estimate for this kind of solution.

On the one hand, following for example the recommendations of an open source company such as Kublr (similar to SUSE Rancher or Red Hat OpenShift), a cluster in a simple scenario with one master node and two worker nodes has, on average, at least these hardware requirements from scratch, before adding any applications to run inside it:

Master node: Kublr-Kubernetes master components (2 GB, 1.5 vCPU)

Worker node 1: Kublr-Kubernetes worker components (0.7 GB, 0.5 vCPU), Feature: ControlPlane (1.9 GB, 1.2 vCPU), Feature: Centralized monitoring (5 GB, 1.2 vCPU), Feature: k8s core components (0.5 GB, 0.15 vCPU), Feature: Centralized logging (11 GB, 1.4 vCPU)

Worker node 2: Kublr-Kubernetes worker components (0.7 GB, 0.5 vCPU), Feature: Centralized logging (11 GB, 1.4 vCPU)
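To get a feeling for what this means before a single application is deployed, the per-component figures above can simply be added up. The following is a minimal sketch of that arithmetic; the dictionary layout and the function names are my own illustration, not anything provided by Kublr.

```python
# (memory in GB, vCPU) for each component, copied from the list above.
# The structure and names are illustrative assumptions, not a Kublr format.
FOOTPRINT = {
    "master": [(2.0, 1.5)],
    "worker-1": [(0.7, 0.5), (1.9, 1.2), (5.0, 1.2), (0.5, 0.15), (11.0, 1.4)],
    "worker-2": [(0.7, 0.5), (11.0, 1.4)],
}


def node_totals(components):
    """Sum memory (GB) and vCPU for the components of one node."""
    mem = round(sum(m for m, _ in components), 2)
    cpu = round(sum(c for _, c in components), 2)
    return mem, cpu


def cluster_totals(footprint):
    """Return per-node totals and the overall (memory, vCPU) total."""
    per_node = {name: node_totals(parts) for name, parts in footprint.items()}
    mem = round(sum(m for m, _ in per_node.values()), 2)
    cpu = round(sum(c for _, c in per_node.values()), 2)
    return per_node, (mem, cpu)


per_node, total = cluster_totals(FOOTPRINT)
for name, (mem, cpu) in per_node.items():
    print(f"{name}: {mem} GB, {cpu} vCPU")
print(f"cluster: {total[0]} GB, {total[1]} vCPU")
```

With the numbers above, the platform alone needs roughly 32.8 GB of memory and 7.85 vCPU across the three nodes, most of it on worker node 1.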

Obviously, once you deploy applications, these requirements will keep growing depending on their needs. The rule of thumb can be:

Available memory = (number of nodes) × (memory per node) – (number of nodes) × 0.7 GB – (has self-hosted logging) × 9 GB – (has self-hosted monitoring) × 2.9 GB – 0.4 GB – 2 GB (central monitoring agent, once per cluster)

Available CPU = (number of nodes) × (vCPU per node) – (number of nodes) × 0.5 – (has self-hosted logging) × 1 – (has self-hosted monitoring) × 1.4 – 0.1 – 0.7 (central monitoring agent, once per cluster)

*By default, Kublr disables scheduling of business applications on the master node, although this can be changed. That is why the formula counts only worker nodes.
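The rule of thumb above is easy to turn into a small helper. This is only a sketch of the two formulas as written in this post; the function and parameter names are hypothetical, and the constants are the ones quoted above.

```python
def available_capacity(worker_nodes, memory_per_node_gb, vcpu_per_node,
                       self_hosted_logging=True, self_hosted_monitoring=True):
    """Estimate memory (GB) and vCPU left for business applications.

    Only worker nodes are counted, as in the rule of thumb above.
    """
    memory = (worker_nodes * memory_per_node_gb
              - worker_nodes * 0.7                  # worker components per node
              - int(self_hosted_logging) * 9.0      # self-hosted logging
              - int(self_hosted_monitoring) * 2.9   # self-hosted monitoring
              - 0.4
              - 2.0)                                # central monitoring agent
    cpu = (worker_nodes * vcpu_per_node
           - worker_nodes * 0.5
           - int(self_hosted_logging) * 1.0
           - int(self_hosted_monitoring) * 1.4
           - 0.1
           - 0.7)                                   # central monitoring agent
    return round(memory, 2), round(cpu, 2)


# Hypothetical sizing: two worker nodes with 16 GB and 4 vCPU each.
memory_gb, vcpu = available_capacity(2, 16, 4)
print(f"Available for applications: {memory_gb} GB, {vcpu} vCPU")
```

For that hypothetical two-node cluster, about 16.3 GB and 3.8 vCPU remain for applications, that is, roughly half of the 32 GB and 8 vCPU you actually bought.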

On top of this, remember to budget for VMware vSphere and vCloud Director licenses, plus hardware and maintenance.

Some Key Takeaways for on-premises deployment

- The SLA depends on the number of clusters with master and worker nodes, their hardware profile, and whether you are using HCI (for example, VMware integrated Kubernetes with its hypervisor to serve HCI if needed; the solution is called Tanzu). You may be able to set up a five-nines (99.999%) scenario, though it is quite expensive.

- CAPEX is attractive for some companies because it reduces taxes. However, you will sometimes need to negotiate with the purchasing department if you want to leverage cloud benefits as well.

- Open source, as opposed to vendors such as VMware or IBM, gives you more flexibility in how you use K8s: you are not tied to a single vendor's default configuration and can choose among flexible configurations.

- Watch out for performance, underutilization, security and disaster recovery. They are all quite challenging and commonly found in these scenarios.

- An on-premises approach may be a good option if you want to customize your applications with specific CI/CD tools and plugins, for example for Jenkins.

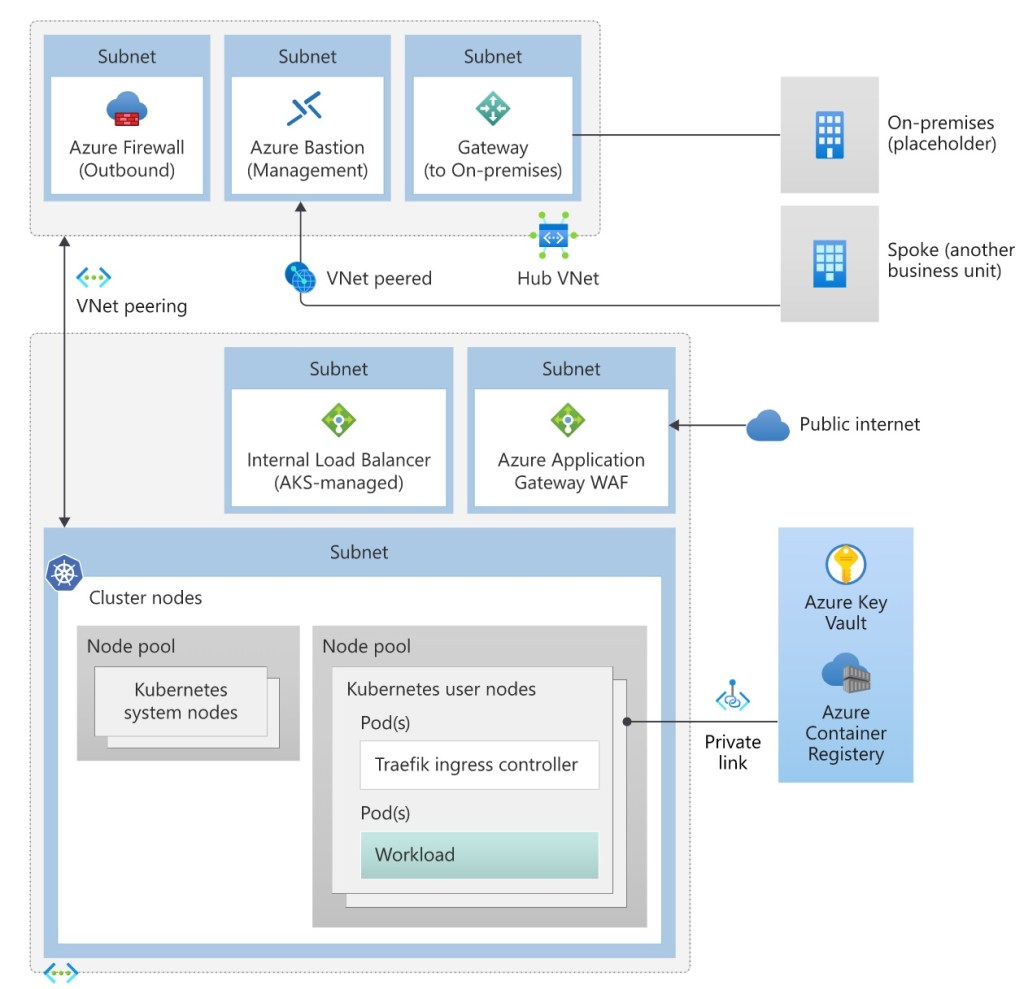

AKS approach to deploying Kubernetes in the cloud: Microsoft's alternative provides a cluster with a master node and as many worker nodes as needed with the right hardware profiles, even scaling out or in depending on the customer's scenario, which is in itself more flexible than a traditional on-premises option.

On the other hand, AKS is an approach that brings the best of Kubernetes while reducing the investment, and it is pure OPEX. We can point out some excellent benefits compared with an on-premises Kubernetes solution. The Azure Kubernetes Service (AKS) Baseline Cluster reference architecture would be the approach to follow.

Some Key Takeaways for AKS deployment

There are no costs associated with AKS itself for the deployment, management, and operation of the Kubernetes cluster. The main cost drivers are the virtual machine instances, storage, and networking resources consumed by the cluster. Consider choosing cheaper VMs for system node pools and using Linux wherever possible.

- We can start by deploying a cluster with a master node and two worker nodes. The cost lies mostly in the worker nodes' compute, where Microsoft recommends DS2_v2 instances, so pay attention to how many namespaces you need and how many pods each application deployment includes.

- In addition, we have to consider persistent storage (remember that pods have ephemeral storage), the database requirements associated with each application and, finally, the network traffic between Azure and on-premises.

- Some clear advantages are:

- An on-premises Kubernetes investment tends to result in an oversized cluster. AKS can be flexible and scalable to meet current business expectations: provision a cluster with the minimum number of nodes and enable the cluster autoscaler to monitor and make sizing decisions.

- Balance pod requests and limits to allow Kubernetes to allocate worker node resources with higher density, so that the Azure hardware (under the hood) is utilized up to the capacity you are paying for.

- Use reserved instances for worker nodes for one or three years, or use a Dev/Test plan to reduce the cost of AKS in development or pre-production environments.

- Automate, automate, automate. We can deploy the AKS infrastructure from scratch with just a few clicks, for example with Bicep, ARM templates, etc.

- AKS can work in a multi-region approach. Keep in mind, however, that data transfers within availability zones of a region are not free. Microsoft states clearly that if your workload is multi-region or there are transfers across billing zones, you should expect additional bandwidth costs.

- AKS is GitOps-ready. As many of you know, GitOps brings best practices such as version control, collaboration, compliance, and continuous integration/continuous deployment (CI/CD) to infrastructure automation.

- Finally, if you are working out how to facilitate governance across all your Azure Kubernetes scenarios, Azure Monitor (through the Container insights overview) provides a multi-cluster view that shows the health status of all monitored Kubernetes clusters running Linux and Windows Server 2019. Moreover, we can leverage native Azure tools such as Kusto queries, Azure Cost Management or Azure Advisor to control your K8s costs.
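Going back to the takeaway about balancing pod requests and limits: since worker node compute is the main cost driver, the requests you set translate directly into the number of nodes you pay for. Here is a rough sketch of that relationship. The allocatable figures are my own assumption for a 2 vCPU / 7 GiB node such as DS2_v2 after system reservations (check the real values on your own nodes), and the pod requests and replica counts are purely hypothetical.

```python
import math


def nodes_needed(replicas, pod_cpu_m, pod_mem_mib, node_cpu_m, node_mem_mib):
    """Return (pods per node, nodes needed) for identical pods.

    CPU is in millicores and memory in MiB. The scheduler places pods
    according to their requests, so the scarcer resource sets the density.
    """
    pods_per_node = min(node_cpu_m // pod_cpu_m, node_mem_mib // pod_mem_mib)
    if pods_per_node < 1:
        raise ValueError("pod requests exceed the node's allocatable capacity")
    return pods_per_node, math.ceil(replicas / pods_per_node)


# ASSUMPTION: allocatable capacity of one worker node (not an official figure).
NODE_CPU_M = 1900
NODE_MEM_MIB = 4600

# Hypothetical workload: 20 replicas, before and after right-sizing CPU requests.
oversized = nodes_needed(20, pod_cpu_m=500, pod_mem_mib=256,
                         node_cpu_m=NODE_CPU_M, node_mem_mib=NODE_MEM_MIB)
right_sized = nodes_needed(20, pod_cpu_m=250, pod_mem_mib=256,
                           node_cpu_m=NODE_CPU_M, node_mem_mib=NODE_MEM_MIB)
print("oversized requests  :", oversized)
print("right-sized requests:", right_sized)
```

Under these assumptions, halving the CPU request takes the same 20 replicas from seven worker nodes down to three, which is exactly the kind of density gain that shows up on the monthly bill.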

In the next post we will focus on EKS and ECS in AWS environments. We'll see how to identify the TCO and ROI for both solutions.

Enjoy the journey to the cloud with me. See you in the next post.