The drums are beating, can you hear them? But I don’t know where the sound is coming from. It’s thunderous, ringing in my ears… boom, boom… pause… boom, boom. Like elephant heartbeats…

It is Cloudmanji! A game that I don’t like to play as a financial manager or as a specialist in the purchasing department, although I have been invited without wanting to attend.

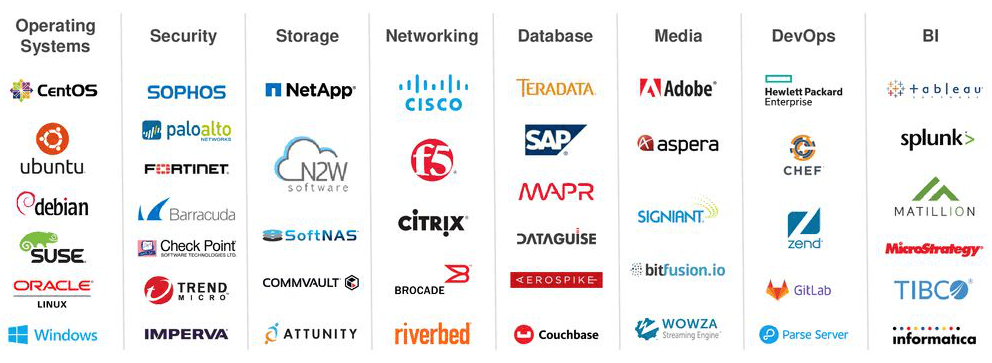

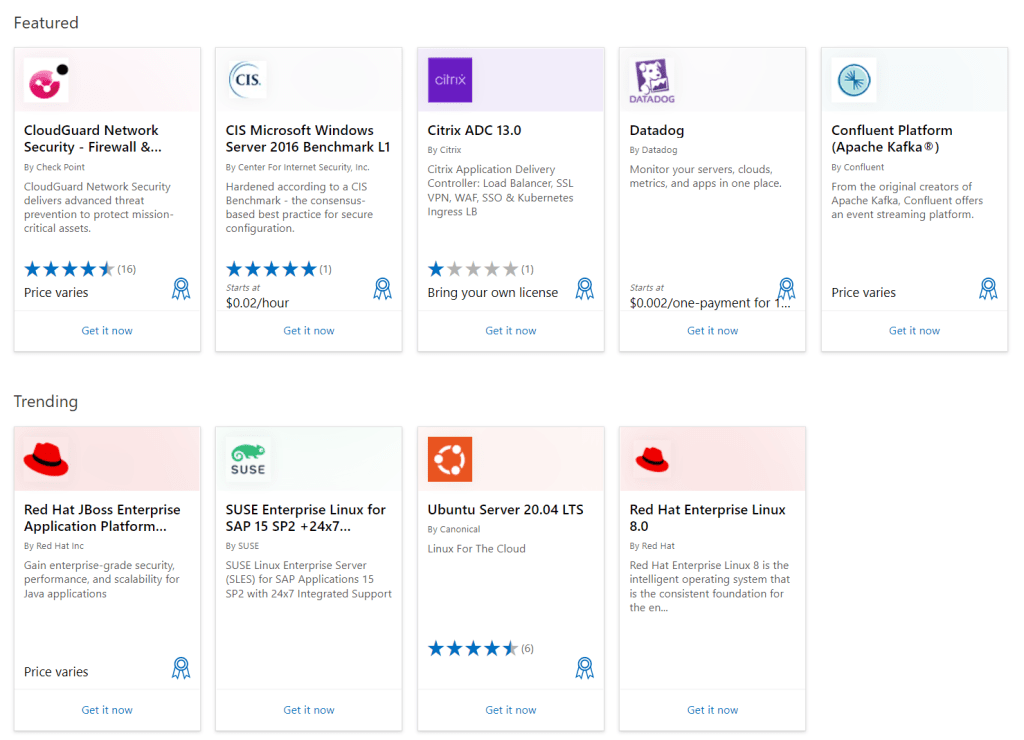

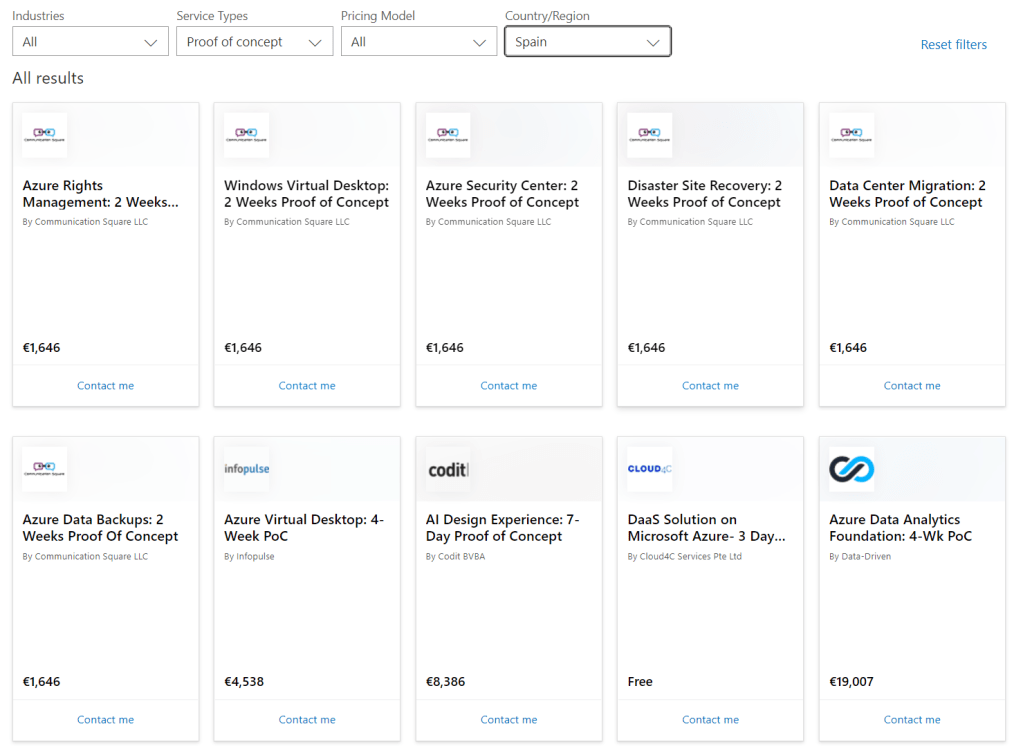

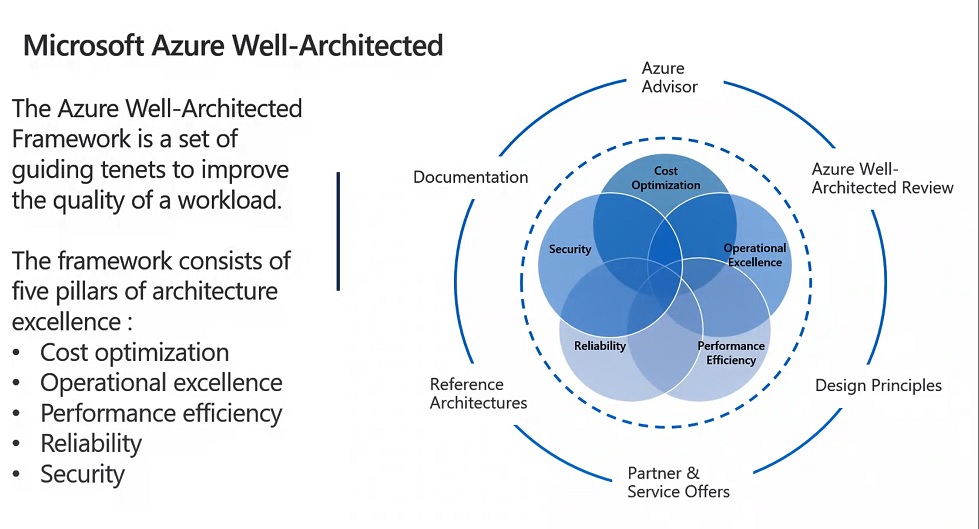

OPEX is all around your work. It’s a new jungle where CAPEX is coming off the board. You are buying software subscriptions, Software as a Service (software that you can consume without installing or maintaining it), cloud infrastructure as PAYG (Pay as You Go), and software products from the marketplace of your favourite hyperscaler. Moreover, others are buying those software solutions too, likely someone in the IT department, and you just receive invoices with no explanation at all.

Therefore, there are “silos” in your company where not everybody is aligned on cloud spending, or maybe the cloud adoption framework was rolled out with a focus on some “personas” and business areas but not on all the stakeholders who should be involved in cloud projects.

First beat of drums

The cost spike takes the top spot. This kind of scenario usually comes out of “Data Analytics” or “Big Data” labs or proofs of concept aimed at analysing specific information in order to make quick business decisions. The sponsor could be HR, Marketing or the PMO directly. The CIO is aligned with them, but he can’t figure out what comes next.

Sometimes it happens because a junior consultant is responsible for deploying the prototype infrastructure on AWS, Oracle Cloud or Azure and simply follows a default configuration, which in many cases means choosing a “Premium Tier” for storage or databases. On top of that, there was no governance at all regarding what the IT guys can or can’t do.

The outcome is an unexpected invoice landing in Finance, which means a dent in the budget for the big fish and a “cash burn” for a small company or a startup.

The CFO wants heads to roll and he doesn’t know where to start. Who was guilty, if anyone? Who did things wrong? Where do you start to fire up your team and see the cloud as an ally?

Second beat of drums

In second place, an orphaned, shared cost for the company: expensive and necessary, a cost which nobody wants assigned to their cost center code.

How do you split this kind of cost across several departments or countries? Let’s say you have several factories: four in France and, even worse, two more in Spain, one in Portugal and one in the UK. Due to Brexit and the currency, things get extraordinarily complex, as you need to invoice in euros and pounds. There are also withholding taxes between Europe and the UK.

You started with an application modernization strategy and migrated legacy applications to Azure. Application refactoring (changing code and decoupling the architecture into small pieces called microservices) improved deployment and on-demand scaling for all those factories, providing quick and effective support for assembly line modifications.

All the factories need that cloud infrastructure; it’s critical, and it means a shared cost for all of them. It’s a complex cost which you can’t estimate properly: production, preproduction and development environments deployed in a multi-region approach. The UK says France should pay the bill as they are the headquarters. Spain and Portugal say they can’t pay the bill as their markets are smaller and less profitable than the others. France says the cost should be split into euros (so France, Spain and Portugal pay that part) and pounds. They say the UK pays for its factory in pounds and takes on everything related to Brexit, such as withholding taxes.

How do you allocate cost in the cloud for such infrastructure? How do you estimate the appropriate average consumption for each factory? How do you get Finance, the IT guys and the Board on the same page, working together to find a solution?
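One common starting point, and only one of several possible answers, is to split the shared invoice in proportion to each country’s measured consumption. The sketch below is a minimal illustration of that idea. The function name `allocate_shared_cost`, the usage units and every figure in it are hypothetical, and it deliberately ignores currency conversion and withholding taxes.

```python
from decimal import Decimal, ROUND_HALF_UP


def allocate_shared_cost(total_cost, usage_by_unit):
    """Split total_cost across units in proportion to their measured usage."""
    total_usage = sum(usage_by_unit.values())
    if total_usage <= 0:
        raise ValueError("total usage must be positive")

    cent = Decimal("0.01")
    shares = {
        unit: (total_cost * usage / total_usage).quantize(cent, rounding=ROUND_HALF_UP)
        for unit, usage in usage_by_unit.items()
    }

    # Rounding can leave a few cents over or under; assign the difference
    # to the largest consumer so the shares always add up to the invoice.
    remainder = total_cost - sum(shares.values())
    largest = max(usage_by_unit, key=usage_by_unit.get)
    shares[largest] += remainder
    return shares


# Hypothetical monthly invoice and usage weights (for example, compute hours).
monthly_invoice_eur = Decimal("10000.00")
usage = {
    "France": Decimal("50"),
    "Spain": Decimal("25"),
    "Portugal": Decimal("10"),
    "UK": Decimal("15"),
}

shares = allocate_shared_cost(monthly_invoice_eur, usage)
for country, amount in shares.items():
    print(f"{country}: {amount} EUR")
```

The hard part is not the arithmetic but agreeing on the usage metric. Whatever Finance, IT and the Board settle on (compute hours, transactions, headcount, revenue) simply replaces the weights in `usage`.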

Third beat of drums

A company jumped into the cloud. They migrated three on-premise data centers. No cloud adoption framework was put on the table, which ended up in a bunch of solutions with inadequate scaling, security issues and no governance or cost allocation at all. Chaos, a nightmare that each new CIO has to cope with.

Not to mention that the company has workloads on two different hyperscalers, for instance GCP and Azure.

After four CIOs and two CISOs have passed through the company, who is in charge of this scenario? Finance says the situation is terrifying. No clear budgets, no budget alerts, no cost allocation, etc. How do you deal with OPEX?
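To make “budget alerts” less abstract, here is a minimal sketch of the logic behind one: compare the month-to-date spend with the budget and report which thresholds have been crossed. The function name `triggered_alerts`, the thresholds and the figures are hypothetical. In practice each hyperscaler offers its own budget alerting service, and this sketch does not call any of them.

```python
def triggered_alerts(budget, spend_to_date, thresholds=(0.5, 0.8, 1.0)):
    """Return the budget thresholds (as fractions) already reached by the spend."""
    if budget <= 0:
        raise ValueError("budget must be positive")
    ratio = spend_to_date / budget
    return [t for t in sorted(thresholds) if ratio >= t]


# Hypothetical monthly budget and month-to-date spend, in the same currency.
alerts = triggered_alerts(budget=20000, spend_to_date=17000)
for threshold in alerts:
    print(f"Alert: spend has reached {threshold:.0%} of the budget")
```

Even a check this simple, run per project or per cost center, would have flagged the cost spike from the first beat of drums before the invoice reached Finance.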

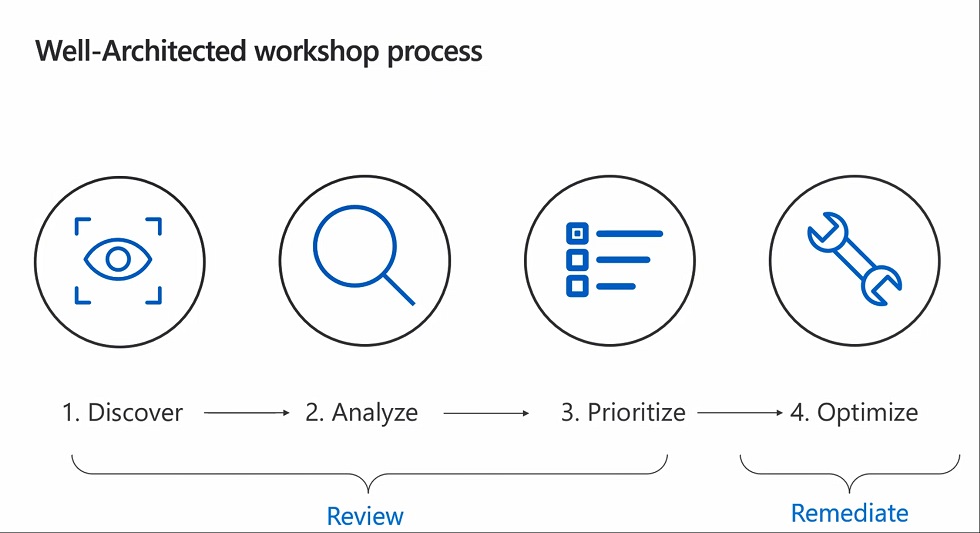

To sum up, these scenarios are covered by FinOps. This is a methodology in which you work with the IT cloud engineers, the CIO, your DevOps team, your purchasing department, Finance, the PMO and some skilled people called FinOps specialists.

In the next Cloudmanji episode, I’ll explain what this approach is all about and how you can leverage the methodology to deal with all these situations.

Dear CFOs, purchasing managers and IT guys, enjoy the journey to the cloud with me… see you in the next post.